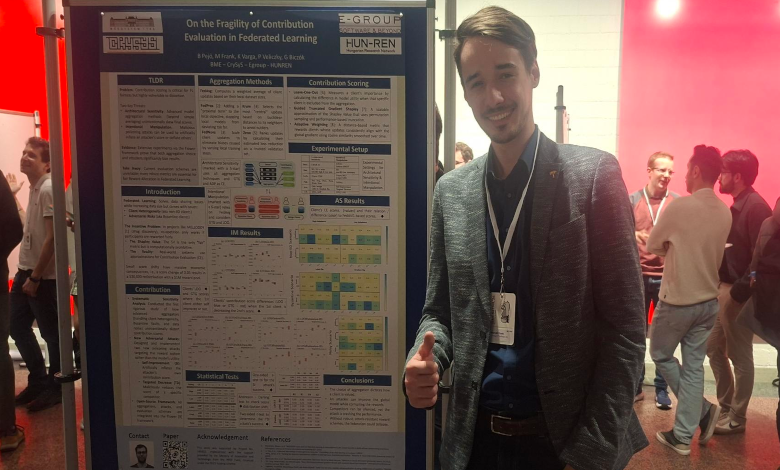

At one of the world’s few conferences dedicated to the security of machine learning systems, research co-authored by E-Group was presented to an international audience, a pivotal moment for the company.

A stage built for the hardest problems in AI

Every year, researchers from across academia and industry gather at the IEEE Symposium on Security and Trustworthy Machine Learning (also known as SaTML) to confront one of the defining challenges of our era: as machine learning systems grow more capable and more pervasive, how do we ensure they remain safe, fair, and resistant to attack? Hosted this year by TU Munich, SaTML is the international conference dedicated to both the offensive and defensive dimensions of ML security. Featuring over 60 speakers across three days, the event brings together researchers from top-tier institutions such as MIT and industry leaders such as Microsoft. The conference maintains a selective peer-review process, and acceptance reflects well-founded scientific work.

The research: when contribution scores can’t be trusted

The paper presented at SaTML investigates a subtle but consequential vulnerability at the heart of federated learning: contribution evaluation. It is the result of a collaboration that includes, among others, Marcell Frank from E-Group and Balázs Pejó from CrySyS Lab. Federated learning is an increasingly important paradigm that allows multiple parties to collaboratively train a machine learning model without moving their raw data. Contribution scores, metrics that capture how much each participant improved the model, are central to making this system fair and incentivising honest participation. But what if those scores themselves can be manipulated?
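
To make the idea of a contribution score concrete, here is a minimal sketch that assumes a leave-one-out scheme: a participant's score is the change in model quality when their update is removed from the aggregate. The leave-one-out rule, the toy `utility` function, the `target` vector and the client updates are all illustrative assumptions, not the setup used in the paper.

```python
from statistics import fmean


def aggregate(updates):
    """Stand-in for federated averaging: coordinate-wise mean of client updates."""
    return [fmean(coords) for coords in zip(*updates)]


def utility(model, target):
    """Toy quality measure: negative squared distance to a hypothetical ideal model."""
    return -sum((m - t) ** 2 for m, t in zip(model, target))


def leave_one_out_scores(updates, target):
    """Score each client by how much utility drops when its update is left out."""
    full = utility(aggregate(updates), target)
    scores = []
    for i in range(len(updates)):
        rest = updates[:i] + updates[i + 1:]
        scores.append(full - utility(aggregate(rest), target))
    return scores


# Hypothetical data: two clients close to the ideal model, one far from it.
target = [1.0, 1.0]
updates = [[1.0, 1.0], [0.8, 1.2], [3.0, -1.0]]
scores = leave_one_out_scores(updates, target)

for client, score in enumerate(scores):
    print(f"client {client}: {score:+.2f}")
```

In this toy run the third client, whose update points away from the target, receives a negative score, while the two clients near the target receive positive ones.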

“We rigorously show that both the choice of aggregation method and the presence of attackers can substantially skew contribution scores highlighting the need for more robust contribution evaluation schemes.”

The research identifies two distinct attack surfaces. First, architectural sensitivity: the paper demonstrates that different model aggregation methods can unintentionally but significantly distort contribution scores, even with no malicious intent present. Second, and more alarmingly, intentional manipulation: through so-called poisoning attacks, malicious participants can strategically craft their model updates to inflate their own scores or deflate the scores of others.
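
Both effects can be illustrated with a toy sketch. It assumes a one-parameter model, leave-one-out scoring, and two simple aggregators (mean and median); these choices and all numbers are hypothetical and are not taken from the paper's experiments.

```python
from statistics import fmean, median

TARGET = 1.0  # hypothetical ideal value of a one-parameter model


def utility(model):
    """Toy quality measure: negative squared error against TARGET."""
    return -(model - TARGET) ** 2


def loo_scores(updates, aggregate):
    """Leave-one-out contribution scores under a given aggregation function."""
    full = utility(aggregate(updates))
    return [
        full - utility(aggregate(updates[:i] + updates[i + 1:]))
        for i in range(len(updates))
    ]


honest = [0.9, 1.0, 1.1]      # three honest clients, symmetric around TARGET
poisoned = honest + [1.6]     # last entry: a crafted, outlying update

clean_mean = loo_scores(honest, fmean)
attacked_mean = loo_scores(poisoned, fmean)
attacked_median = loo_scores(poisoned, median)

for name, scores in [
    ("mean, no attacker", clean_mean),
    ("mean, attacker", attacked_mean),
    ("median, attacker", attacked_median),
]:
    print(f"{name:18s}", [round(s, 4) for s in scores])
```

Without the crafted update, the clients at 0.9 and 1.1 receive equal scores. Once it is added, mean aggregation ranks the client at 0.9 well above the client at 1.1, and switching to median aggregation turns the score of the client at 1.1 negative, even though neither honest client changed its behaviour.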

The findings were validated through extensive experiments across diverse datasets and model architectures, ensuring the results hold across a range of real-world conditions.

Key findings at a glance

- Standard contribution evaluation is fragile under both benign architectural variation and adversarial conditions.

- Advanced aggregation methods designed to improve robustness can unintentionally alter fairness outcomes.

- Malicious participants could use poisoning attacks to game the contribution scoring scheme to their advantage.

- The research calls for a new generation of contribution evaluation schemes that are attack-aware by design.

The presentation was delivered by Balázs Pejó, Ph.D., an assistant professor at CrySyS Lab and Research Lead at E-Group. This dual role, bridging cutting-edge academic research with applied security work in industry, is what turns frontier research into real-world capability.

A contribution, not just a presence

Participation in SaTML reflects E-Group’s broader approach to AI: not only keeping pace with developments in the field, but contributing to them. The paper, a project spanning three institutions and the only one from Eastern Europe at the conference, moves E-Group from regional relevance to the international stage. The research will be published in a special edition of an IEEE journal alongside other studies from the conference, adding a peer-reviewed publication to the company’s growing research portfolio. For clients and partners, it is a concrete indicator that E-Group’s engagement with machine learning security goes beyond awareness; it is grounded in active, published research.